Root-cause investigation at 200mm specialty fabs has a timing problem. When a yield excursion hits, the standard process is to pull wafer maps from the last 48 hours, load them into a review station, and have a yield engineer stare at spatial patterns until something clicks. On a good week, that takes 3 days. On a bad week, the fab is still running product while the team debates whether the edge-ring distribution they're looking at is a resist coat issue or a CMP artifact. We've seen cycles stretch to 7 days at analog and power fabs carrying 40,000 to 60,000 wafer starts per month. That's expensive ambiguity.

The core problem isn't data availability. Most 200mm fabs running KLA Surfscan or Onto Innovation inspection tools have more defect data than they can process manually. The problem is interpretation speed. Human pattern recognition is good, but it is slow, inconsistent across shifts, and hard to scale when you're cross-correlating defect signatures from 4 to 6 inspection steps across a 15-mask-level flow.

Why Manual Pattern Reading Breaks Down

Defect maps carry spatial information that encodes process history. An edge-ring distribution suggests something different from a center-cluster. A scratch-linear pattern points to mechanical contact. A radial-spoke pattern usually traces back to spin-coat non-uniformity or chuck contamination. Experienced yield engineers know this. The issue is that recognizing a known pattern type and then linking it to a specific process step requires holding a lot of context simultaneously: what tool ran this lot, when it last had a PM, what other lots showed similar patterns that week, and how the spatial correlation compares at wafer level versus lot level.

Manual review handles one wafer at a time. Human pattern matching works well for archetypes, but accuracy deteriorates when the defect map is a composite of two overlapping signatures, or when the primary signal is subtle and sits at the 70th percentile of historical density. In our experience, manual review misses 25 to 40% of the composite signatures that automated spatial correlation flags on the same data set.

Shift handoffs make this worse. An engineer who reviewed 30 maps in the morning hands off to someone who reviewed none, and the institutional knowledge doesn't transfer. Automated signature matching addresses that continuity problem: the same library and the same scoring apply on every shift and at every handoff.

How Signature Libraries Work

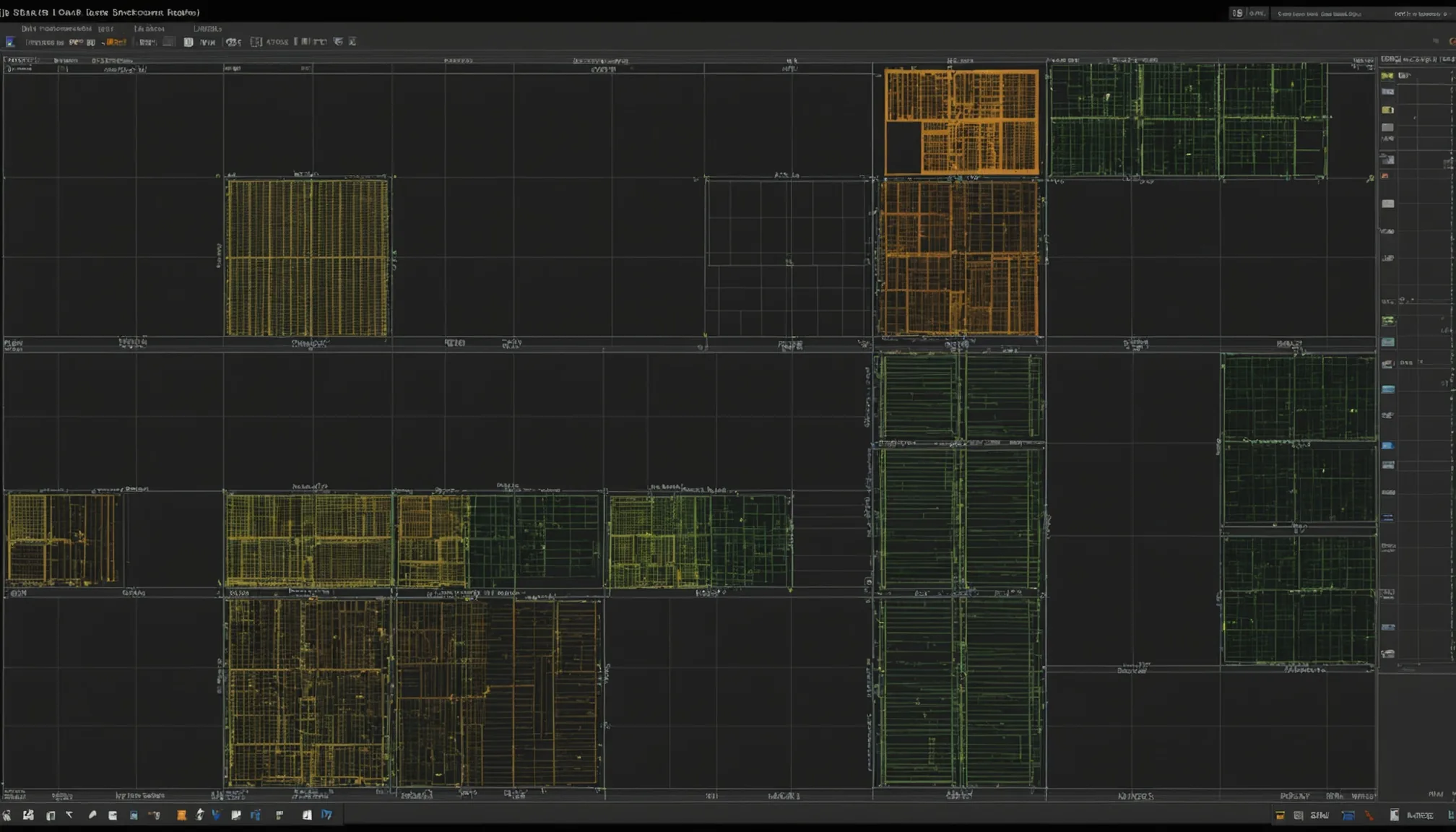

A defect signature library is a structured repository of pre-categorized wafer map patterns, each annotated with the defect class, spatial distribution type, associated process steps, and historical root-cause resolutions. Think of it as a lookup table, but one that does spatial matching rather than exact string comparison.

At a practical level, the library stores reference signatures as normalized spatial probability distributions. When a new inspection output arrives, the system computes a similarity score against each library entry using methods like Zernike moment decomposition, kernel density estimation overlap, or grid-cell correlation, depending on the implementation. Signatures above a match threshold are ranked and returned with confidence scores.
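The lookup described above can be sketched in a few lines. This is a minimal illustration, not a vendor schema or a production matcher: the `SignatureEntry` fields, the `histogram_overlap` stand-in score, and the 0.6 threshold are all assumptions chosen for readability, and the four-bin reference distributions are toy data.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SignatureEntry:
    """One library record. Field names are illustrative assumptions."""
    name: str
    defect_class: str
    distribution_type: str      # e.g. "edge-ring", "center-cluster"
    process_steps: list[str]
    reference: list[float]      # normalized spatial distribution, sums to 1
    resolutions: list[str] = field(default_factory=list)


def histogram_overlap(p: list[float], q: list[float]) -> float:
    """Stand-in similarity in [0, 1]: shared mass of two normalized histograms."""
    return sum(min(a, b) for a, b in zip(p, q))


def rank_matches(incoming, library, threshold=0.6, top_n=3):
    """Score the incoming map against every entry and keep those at or above threshold."""
    scored = [(histogram_overlap(incoming, e.reference), e.name) for e in library]
    hits = [s for s in scored if s[0] >= threshold]
    return sorted(hits, reverse=True)[:top_n]


# Toy library: four coarse radial bins ordered edge, center, center, edge.
library = [
    SignatureEntry("edge-ring-A", "particle", "edge-ring",
                   ["resist coat"], [0.4, 0.1, 0.1, 0.4]),
    SignatureEntry("center-B", "particle", "center-cluster",
                   ["spin coat"], [0.1, 0.4, 0.4, 0.1]),
]
matches = rank_matches([0.35, 0.15, 0.15, 0.35], library)
```

In a real deployment the similarity function would be one of the spatial methods named above; the ranking and threshold logic around it stays the same.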

The four primary categories that cover the majority of 200mm specialty fab defects are:

| Signature Type | Spatial Characteristic | Common Root Cause Areas |
|---|---|---|
| Edge-ring | Annular band 5-15mm from wafer edge | Resist coat, edge bead removal, CMP edge exclusion |
| Center-cluster | Radially symmetric concentration at wafer center | Spin coat, chuck flatness, deposition uniformity |
| Scratch-linear | Linear or arc-shaped track across die rows | Handling, CMP pad contact, wafer transport |
| Radial-spoke | Radial arms extending from center outward | Spin coat non-uniformity, chuck contamination |

A mature library at a 200mm MEMS or power fab typically contains 200 or more discrete signature entries. Some fabs running complex BiCMOS or SiGe flows maintain libraries exceeding 350 entries. The library grows over time as new failure modes are characterized and added.

KLA and Onto Data Formats in Practice

The two dominant inspection platforms at 200mm specialty fabs are KLA (formerly KLA-Tencor, now KLA Corporation) and Onto Innovation (formed by the merger of Nanometrics and Rudolph Technologies). Both output defect data in formats that carry spatial coordinates, defect classification codes, and inspection metadata. The formats are not identical, and that's where a lot of manual workflow overhead historically lived.

KLA systems output KLARF (KLA Results File) format. KLARF is structured ASCII with a defined grammar: header block, wafer info block, defect record block. Each defect record includes X/Y coordinates in microns (typically die-relative and paired with die row and column indices, so wafer-level positions are reconstructed from the die pitch), a defect class code, a rough size estimate, and an image reference ID. KLARF has been around since the early 1990s and is well-supported by most yield management systems. Version 1.8 is the most common variant in active use at 200mm fabs today.

Onto systems primarily output proprietary formats (historically delivered as SECS/GEM streams or CSV variants), though many current tools support KLARF export as well. The practical issue is that coordinate reference frames, defect class taxonomies, and size normalization differ enough between KLA and Onto outputs that direct comparison requires a normalization step. Fabs running both inspection tool brands need a data harmonization layer before signature matching can occur across the combined data set.

This normalization is typically handled in the yield management system layer, whether that's Klarity Defect, PDF Solutions, or an in-house tool. The output is a unified wafer map in a common coordinate space, with defect classes remapped to a shared taxonomy. That unified representation is what flows into the signature matching engine.
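A harmonization step of this kind might look roughly like the sketch below. Everything specific here is an assumption for illustration: the tool names, the class-code maps, the die pitch, and the premise that one tool reports die-relative coordinates that must be shifted into a wafer-centered frame. Real taxonomies and reference frames are fab-specific.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnifiedDefect:
    x_um: float        # wafer-centered X in microns
    y_um: float        # wafer-centered Y in microns
    defect_class: str  # label in the shared taxonomy


# Hypothetical per-tool class maps; not taken from any vendor documentation.
CLASS_MAP = {
    "tool_a": {1: "particle", 2: "scratch", 3: "residue"},
    "tool_b": {"P": "particle", "S": "scratch", "R": "residue"},
}


def die_to_wafer(x_rel, y_rel, x_index, y_index, die_pitch, die00_origin):
    """Convert die-relative microns plus die indices to wafer-centered microns.

    die00_origin is the wafer-centered position of the lower-left corner of
    die (0, 0); die_pitch is the (x, y) step between neighboring dies.
    """
    x = die00_origin[0] + x_index * die_pitch[0] + x_rel
    y = die00_origin[1] + y_index * die_pitch[1] + y_rel
    return x, y


def harmonize(tool, raw_class, x_um, y_um):
    """Remap a tool-specific class code; unknown codes become 'unclassified'."""
    label = CLASS_MAP.get(tool, {}).get(raw_class, "unclassified")
    return UnifiedDefect(x_um, y_um, label)


# A defect 120 um / 80 um into die (2, -1) on a 5000 x 4000 um die grid.
x, y = die_to_wafer(120.0, 80.0, 2, -1, (5000.0, 4000.0), (-2500.0, -2000.0))
defect = harmonize("tool_a", 2, x, y)
```

Mapping unknown codes to an explicit `unclassified` label, rather than dropping them, keeps novel defect classes visible to the matching step downstream.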

Practical Workflow for Yield Engineers

Automated signature matching doesn't replace yield engineer judgment. It accelerates the triage step so engineers can spend their time on root-cause confirmation rather than pattern identification.

A realistic workflow at a fab that has deployed signature matching looks like this:

- Inspection output ingested within minutes of lot completion. No manual export step, no waiting for the morning review meeting.

- Automated match against library runs in under 2 minutes per wafer. Top 3 candidate signatures returned with match scores.

- Alert triggered if match score for a known excursion signature exceeds threshold, or if no match is found (indicating a novel pattern that needs characterization).

- Engineer reviews candidates, confirms the top match or escalates for full root-cause investigation. Confirmation typically takes 15 to 30 minutes instead of the 6 to 12 hours a manual review cycle requires.

- Resolution logged back into the signature library, improving future match accuracy for similar patterns.
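The alerting rule in the steps above (alert on a strong match to a known excursion signature, or on no match at all) can be expressed as a small decision function. This is a sketch under assumed thresholds; the 0.8 and 0.6 cutoffs and the action names are placeholders, not values from any deployed system.

```python
def triage(candidates, excursion_names, alert_threshold=0.8, match_threshold=0.6):
    """Decide what to do with the matcher output for one wafer.

    candidates: list of (score, signature_name) pairs in any order.
    excursion_names: set of signature names tied to known excursions.
    """
    top = sorted(candidates, reverse=True)[:3]           # top 3 candidates
    matched = [c for c in top if c[0] >= match_threshold]
    if not matched:
        # Nothing in the library resembles this map: novel pattern.
        return {"action": "alert_novel", "candidates": top}
    if any(name in excursion_names and score >= alert_threshold
           for score, name in matched):
        return {"action": "alert_excursion", "candidates": matched}
    # Recognized pattern, but not a strong excursion hit: routine confirmation.
    return {"action": "engineer_review", "candidates": matched}
```

For example, `triage([(0.9, "edge-ring-A"), (0.5, "center-B")], {"edge-ring-A"})` returns an `alert_excursion` action carrying only the edge-ring candidate, since the second score falls below the match threshold.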

The feedback loop is where the real long-term value accumulates. Each confirmed match adds a data point that strengthens the library's confidence model. In our data, match accuracy for known signature types improves from roughly 72% at initial deployment to above 91% after 12 to 18 months of active use.

Spatial Correlation Methods Worth Understanding

For yield engineers who want to understand what's happening inside the matching engine, a few spatial correlation methods dominate current implementations.

Grid-cell correlation divides the wafer into an N x N grid (typically 32x32 or 64x64) and computes defect density per cell. Correlation between the incoming map and library entries is computed as a Pearson or Spearman coefficient across the grid. Fast and interpretable, but sensitive to absolute density differences between lots at different inspection levels.
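As a rough sketch of grid-cell correlation, the code below bins defect coordinates onto an N x N grid and computes a Pearson coefficient between two maps. It uses only the standard library to stay self-contained; a production version would more likely use NumPy. The 100 mm radius matches a 200mm wafer, while the synthetic `ring` and `center` maps are made-up test data.

```python
import math


def grid_density(defects, wafer_radius_mm=100.0, n=32):
    """Count defects per cell on an n x n grid spanning the wafer's bounding square."""
    grid = [0] * (n * n)
    cell = 2 * wafer_radius_mm / n
    for x, y in defects:
        if math.hypot(x, y) > wafer_radius_mm:
            continue  # ignore coordinates that fall off the wafer
        col = min(int((x + wafer_radius_mm) / cell), n - 1)
        row = min(int((y + wafer_radius_mm) / cell), n - 1)
        grid[row * n + col] += 1
    return grid


def pearson(a, b):
    """Pearson correlation of two equal-length sequences; 0.0 if either is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)


def circle_points(radius_mm, count):
    """Synthetic defects evenly spaced on a circle (test data only)."""
    return [(radius_mm * math.cos(2 * math.pi * k / count),
             radius_mm * math.sin(2 * math.pi * k / count)) for k in range(count)]


ring = grid_density(circle_points(90.0, 60))     # edge-ring-like map
center = grid_density(circle_points(10.0, 60))   # center-cluster-like map
same = pearson(ring, ring)
different = pearson(ring, center)
```

Here `same` comes out at 1 (up to rounding) and `different` is slightly negative, because the two maps occupy disjoint cells.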

Zernike moment decomposition represents the spatial distribution as a set of orthogonal polynomial coefficients. Because Zernike polynomials are defined over a circular domain (matching the wafer shape), they capture rotationally symmetric patterns like edge-rings and center-clusters with high accuracy. Once the map is normalized by total defect count, the coefficients are insensitive to lot-to-lot density variation, and the coefficient magnitudes do not change when the wafer map is rotated. More computationally expensive, but not prohibitively so on modern hardware.
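To make the Zernike approach concrete, here is a minimal sketch that computes moments directly from defect coordinates using the standard radial-polynomial formula. Treating the defects as a point set normalized by count, the choice of maximum order 4, and the function names are assumptions for illustration; real engines may work from binned or smoothed maps instead.

```python
import cmath
import math
from math import factorial


def radial_poly(n, m, rho):
    """Zernike radial polynomial R_n^|m|(rho); zero when n - |m| is odd."""
    m = abs(m)
    if (n - m) % 2:
        return 0.0
    total = 0.0
    for k in range((n - m) // 2 + 1):
        coeff = ((-1) ** k * factorial(n - k)
                 / (factorial(k)
                    * factorial((n + m) // 2 - k)
                    * factorial((n - m) // 2 - k)))
        total += coeff * rho ** (n - 2 * k)
    return total


def zernike_moment(defects, n, m, wafer_radius_mm=100.0):
    """Moment A_nm of the defect point set, normalized by defect count."""
    acc = 0j
    count = 0
    for x, y in defects:
        rho = math.hypot(x, y) / wafer_radius_mm
        if rho > 1.0:
            continue  # outside the unit disk the polynomials are undefined
        theta = math.atan2(y, x)
        acc += radial_poly(n, m, rho) * cmath.exp(-1j * m * theta)
        count += 1
    if count == 0:
        return 0j
    return (n + 1) / math.pi * acc / count


def zernike_descriptor(defects, max_order=4):
    """Rotation-invariant magnitudes |A_nm| for every valid (n, m) with m >= 0."""
    return [abs(zernike_moment(defects, n, m))
            for n in range(max_order + 1)
            for m in range(n % 2, n + 1, 2)]
```

Two descriptors can then be compared with any vector distance. Dividing by the defect count is what removes the density dependence, and taking magnitudes is what removes the dependence on wafer orientation.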

Kernel density estimation (KDE) overlap generates a continuous probability density from the discrete defect coordinates, then computes the overlap between the incoming map's KDE surface and the library entry's KDE surface, that is, the probability mass the two surfaces share. Handles sparse defect maps well. Resistant to noise. This method is our preferred baseline for early-step inspection data where defect counts per wafer are often below 50.
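A minimal KDE overlap sketch follows. It evaluates a Gaussian kernel on a coarse grid, normalizes each surface to unit mass, and sums the pointwise minimum. The 10 mm bandwidth and 24 x 24 grid are illustrative assumptions, and a production version would more likely use an optimized library KDE than this pure-Python loop.

```python
import math


def kde_surface(defects, bandwidth_mm=10.0, wafer_radius_mm=100.0, n=24):
    """Gaussian KDE sampled at grid-cell centers inside the wafer, summing to 1."""
    step = 2 * wafer_radius_mm / n
    centers = [-wafer_radius_mm + (i + 0.5) * step for i in range(n)]
    surface = []
    for gy in centers:
        for gx in centers:
            if math.hypot(gx, gy) > wafer_radius_mm:
                surface.append(0.0)  # cell center lies off the wafer
                continue
            surface.append(sum(
                math.exp(-((gx - x) ** 2 + (gy - y) ** 2)
                         / (2 * bandwidth_mm ** 2))
                for x, y in defects))
    total = sum(surface)
    return [v / total for v in surface] if total > 0 else surface


def kde_overlap(p, q):
    """Shared probability mass of two normalized surfaces, between 0 and 1."""
    return sum(min(a, b) for a, b in zip(p, q))


def circle_points(radius_mm, count):
    """Synthetic defects evenly spaced on a circle (test data only)."""
    return [(radius_mm * math.cos(2 * math.pi * k / count),
             radius_mm * math.sin(2 * math.pi * k / count)) for k in range(count)]


# Sparse maps: 40 defects each, below the 50-defect range discussed above.
ring_kde = kde_surface(circle_points(90.0, 40))
center_kde = kde_surface(circle_points(5.0, 40))
self_score = kde_overlap(ring_kde, ring_kde)
cross_score = kde_overlap(ring_kde, center_kde)
```

Because each surface is normalized before comparison, the score reflects where defects sit rather than how many there are, which is why the method holds up on sparse maps.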

Real implementations often combine methods: grid-cell for fast initial screening to filter the library down to 10 to 20 candidates, then Zernike or KDE for high-resolution scoring on the shortlist.
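The screen-then-score structure can be sketched independently of which methods fill each stage. In this sketch the scorers are passed in as plain functions; the `(name, reference)` library layout and the default shortlist size of 15 are assumptions chosen to sit inside the 10 to 20 range mentioned above.

```python
def two_stage_match(incoming, library, coarse_score, fine_score,
                    shortlist_size=15, top_n=3):
    """Cheap screening over the whole library, expensive scoring on the shortlist.

    library: list of (name, reference) pairs.
    coarse_score, fine_score: callables taking (incoming, reference) -> float,
    where higher means more similar.
    """
    # Stage 1: rank every entry with the fast scorer (e.g. grid-cell correlation).
    screened = sorted(library,
                      key=lambda entry: coarse_score(incoming, entry[1]),
                      reverse=True)
    shortlist = screened[:shortlist_size]
    # Stage 2: run the slower scorer (e.g. Zernike or KDE) only on the shortlist.
    scored = [(fine_score(incoming, ref), name) for name, ref in shortlist]
    return sorted(scored, reverse=True)[:top_n]
```

The design choice is that the expensive scorer is called at most `shortlist_size` times per wafer regardless of how large the library grows.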

What This Changes for Root-Cause Investigation Time

The direct impact is on cycle time. Fabs that previously ran 3 to 7 day root-cause investigation cycles for pattern-driven yield excursions have brought that down to same-shift or next-shift resolution for known signature types. That's not a marginal improvement. At a fab running 50,000 wafer starts per month with a 2% yield hit during an undiagnosed excursion, each day of delayed root-cause resolution costs between $150,000 and $400,000 in lost die, depending on product mix and die value.
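The arithmetic behind that range can be checked with a short calculation. The sketch assumes a 30-day month and that the 2% loss applies to the fab's entire output; under those assumptions, the $150,000 to $400,000 range corresponds to a good-die value of roughly $4,500 to $12,000 per wafer. Those per-wafer values are back-solved from the figures above, not independently sourced.

```python
def daily_excursion_cost(wafer_starts_per_month, yield_loss_fraction,
                         die_value_per_wafer, days_per_month=30):
    """Estimated value of die lost per day while an excursion goes undiagnosed.

    Assumes the yield loss applies to every wafer started (a simplification).
    """
    wafers_per_day = wafer_starts_per_month / days_per_month
    return wafers_per_day * yield_loss_fraction * die_value_per_wafer


# Back-solved per-wafer die values that reproduce the quoted range.
low = daily_excursion_cost(50_000, 0.02, 4_500)
high = daily_excursion_cost(50_000, 0.02, 12_000)
```

At roughly 1,667 wafer starts per day, a 2% hit is the equivalent of about 33 wafers of good die lost daily, so the total scales directly with die value per wafer.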

Unknown signatures still require full investigation. That's fine. The point isn't to eliminate engineering judgment; it's to reserve it for the cases that actually require it. Automated matching handles the 70 to 80% of patterns that are recognized types. Engineers focus their attention on the 20 to 30% that are novel, ambiguous, or high-stakes.

200mm specialty fabs often operate with lean yield engineering teams, typically 3 to 5 engineers covering round-the-clock operations. That's where fast automated triage matters most. Each engineer has more diagnostic capacity when the pattern recognition step runs in the background rather than consuming the first half of every investigation cycle.

Practical note: signature library initialization is the gating step for new deployments. Fabs going live with fewer than 80 to 100 well-characterized library entries will see low match rates early on, and engineers may stop trusting the system before it has had a chance to improve. Investing 4 to 6 weeks in systematic library population, using historical KLARF archives, pays back within the first quarter of operation.

The underlying data has always been there. KLA and Onto systems have been generating spatially precise defect maps for decades. What's changed is the infrastructure to match, categorize, and act on that data faster than any manual process can. For analog, power, and MEMS fabs running complex specialty flows, that speed difference is increasingly where yield engineering capacity either gets consumed or gets multiplied.