Most specialty fabs running both KLA and Onto Innovation inspection tools treat them as parallel data streams that never meet. That's a mistake. In our experience, the highest-value root-cause cycles we've closed came from combining those two streams into a single spatial view. The workflow isn't trivial, but it's repeatable once you've done it a few times.

Why Two-Vendor Data Creates a Gap

KLA and Onto Innovation both export defect data, but the formats reflect different design philosophies. KLA systems (KLARF-based, typically .001 files) encode defect records as whitespace-delimited text rows with die-relative offsets and die indices, in a coordinate system anchored to the wafer flat or notch. Onto Innovation Atlas tools export in a different structure, often CSV-derived or proprietary XML, with their own coordinate reference frame and defect classification taxonomy.

Here's the thing: even when both tools inspect the same wafer, their reported (X, Y) coordinates for the same physical particle will differ. The delta is not random noise. It comes from three sources: notch-alignment offset between load ports, die-origin convention differences, and stage calibration drift unique to each tool. In fabs we've worked with, the uncorrected spatial offset between KLA and Onto coordinates for the same defect can run anywhere from 5 microns to over 40 microns on a 200mm wafer, and considerably more near the edge when rotation goes uncorrected. At 40 microns, a naive overlay shows two separate defect populations where there's really one.

Coordinate Alignment: The First Technical Step

Before any correlation analysis, you need a coordinate transform that maps one tool's reference frame onto the other's. The standard approach is to run a known reference wafer, typically a standard particle deposition wafer from your internal metrology lab, through both tools on the same day. Capture the (X, Y) coordinates of 50 or more well-separated reference particles from both outputs, pair each particle with its counterpart in the other tool's list, then compute an affine transform that corrects translation, rotation, and scale.
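A minimal sketch of the transform fit in Python with NumPy, assuming the reference particles have already been paired across the two tools' outputs and both coordinate lists are in the same units. The function names (`fit_affine`, `apply_affine`, `rotation_deg`) are hypothetical. A general six-parameter affine also absorbs shear (axis non-orthogonality) on top of translation, rotation, and scale; that is a choice made here, not something the workflow requires.

```python
import numpy as np


def fit_affine(src_xy, dst_xy):
    """Least-squares 2D affine transform mapping src_xy onto dst_xy.

    src_xy, dst_xy: (N, 2) arrays of matched reference-particle coordinates,
    row i in both arrays being the same physical particle, in the same units.
    Returns a 2x3 matrix A with dst ~= A[:, :2] @ [x, y] + A[:, 2].
    """
    src = np.asarray(src_xy, dtype=float)
    dst = np.asarray(dst_xy, dtype=float)
    if src.shape != dst.shape or src.ndim != 2 or src.shape[1] != 2 or len(src) < 3:
        raise ValueError("need matching (N, 2) arrays with N >= 3")
    # Design matrix: one row [x, y, 1] per reference particle.
    design = np.column_stack([src, np.ones(len(src))])
    # Solve for both output coordinates at once; coeffs has shape (3, 2).
    coeffs, _, _, _ = np.linalg.lstsq(design, dst, rcond=None)
    return coeffs.T


def apply_affine(A, xy):
    """Map (N, 2) secondary-tool coordinates into the reference frame."""
    xy = np.asarray(xy, dtype=float)
    return xy @ A[:, :2].T + A[:, 2]


def rotation_deg(A):
    """Rotation angle of the linear part, in degrees (meaningful when shear is small)."""
    return float(np.degrees(np.arctan2(A[1, 0], A[0, 0])))
```

With 50 or more pairs the fit is heavily overdetermined, so the residuals `apply_affine(A, src) - dst` make a useful sanity check: a few large residuals can point to a mismatched particle pair rather than a bad transform.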

In practice, rotation is almost always the dominant term. Stage theta differences between KLA and Onto load ports in the same bay can introduce a 0.05 to 0.12 degree systematic rotation. That sounds small. At the wafer edge, 0.1 degrees at 95mm radius translates to roughly 165 microns of positional error. Not small.
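The edge-error arithmetic is easy to check. This sketch computes the displacement a pure rotation produces at a given radius, using the chord length 2r·sin(θ/2), which for angles this small is essentially r·θ. The function name is illustrative.

```python
import math


def rotation_displacement_um(radius_mm: float, theta_deg: float) -> float:
    """Positional error (microns) at radius_mm caused by a rotation of theta_deg.

    Uses the chord length 2 * r * sin(theta / 2); for small angles this is
    essentially the arc length r * theta.
    """
    radius_um = radius_mm * 1000.0
    return 2.0 * radius_um * math.sin(math.radians(theta_deg) / 2.0)


for theta in (0.05, 0.10, 0.12):
    print(f"{theta:.2f} deg at 95 mm -> {rotation_displacement_um(95, theta):.0f} um")
```

For 0.05, 0.10, and 0.12 degrees at 95mm this prints roughly 83, 166, and 199 microns, so the full rotation range corresponds to edge errors on the order of 80 to 200 microns.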

Once you have the affine transform matrix, apply it to all defect coordinates from the secondary tool before you do any overlay or density-map computation. Store the transform as a versioned calibration artifact, and re-run the reference wafer any time either tool undergoes PM or stage replacement. We've found that recharacterizing after every quarterly PM on the Atlas system prevents silent drift from accumulating.
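One way to keep the transform as a versioned calibration artifact is a small immutable record serialized to JSON. This is a sketch; the class and field names are hypothetical, and in production you might instead store it in the defect database keyed by tool pair and version.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AlignmentCalibration:
    """Hypothetical versioned record of a secondary-to-reference transform."""
    version: int
    source_tool: str   # tool whose coordinates get transformed (e.g. the Onto tool)
    target_tool: str   # tool whose frame is canonical (e.g. the KLA tool)
    measured_on: str   # ISO date of the reference-wafer run
    matrix: tuple      # 2x3 affine matrix as nested tuples

    def apply(self, x: float, y: float) -> tuple:
        """Transform one secondary-tool coordinate into the reference frame."""
        (a, b, c), (d, e, f) = self.matrix
        return (a * x + b * y + c, d * x + e * y + f)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "AlignmentCalibration":
        raw = json.loads(text)
        # JSON has no tuples; restore the nested-tuple matrix so equality holds.
        raw["matrix"] = tuple(tuple(row) for row in raw["matrix"])
        return cls(**raw)
```

Bumping `version` on every reference-wafer run, rather than overwriting the previous record, lets you reprocess historical defect data with whichever transform was valid at the time.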

Data Format Normalization

After coordinate alignment, the next barrier is schema normalization. A practical approach is to build a thin parser layer that maps both source formats to a common internal defect record schema. At minimum, that schema needs:

- Wafer ID (lot + slot, normalized to your MES format)

- X, Y in a single canonical coordinate system (post-transform for the secondary tool)

- Defect class or bin code (requires a class-mapping table since KLA and Onto classification taxonomies differ)

- Tool ID and inspection recipe name

- Timestamp of the inspection event
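The minimum schema above maps naturally onto a small record type. A sketch, with hypothetical field names and an illustrative lot/slot convention (`LOT123.05`); substitute your MES's actual format.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class DefectRecord:
    """Hypothetical common internal defect schema; field names are illustrative."""
    wafer_id: str           # lot + slot in MES format, e.g. "LOT123.05"
    x_um: float             # canonical X (secondary tool already transformed)
    y_um: float             # canonical Y
    defect_class: str       # canonical class from the class-mapping table
    tool_id: str
    recipe: str
    inspected_at: datetime  # timestamp of the inspection event


def normalize_wafer_id(lot: str, slot: int) -> str:
    """Illustrative MES convention: trimmed upper-case lot, two-digit slot."""
    return f"{lot.strip().upper()}.{slot:02d}"
```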

The defect class mapping table is where fabs usually take shortcuts, and it costs them later. KLA might call a 0.3-micron scattering defect "LPD" while Onto classifies the same morphology as "Point Defect, Class 3." If you map them incorrectly when building density overlays, you'll generate false spatial correlation. Worth the upfront effort to get this right. One hour building the table saves 20 hours of head-scratching later.
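A sketch of the class-mapping table as a lookup keyed by (vendor, native class). The canonical class names are hypothetical, and the two entries simply encode the LPD / "Point Defect, Class 3" example above. The design choice worth copying is failing loudly on unmapped classes rather than silently binning them.

```python
# Hypothetical mapping table: (vendor, native class) -> canonical class.
CLASS_MAP = {
    ("KLA", "LPD"): "POINT_SCATTER",
    ("ONTO", "Point Defect, Class 3"): "POINT_SCATTER",
}


class UnmappedClassError(KeyError):
    """Raised when a native class has no entry in the mapping table."""


def canonical_class(vendor: str, native_class: str) -> str:
    key = (vendor.strip().upper(), native_class.strip())
    try:
        return CLASS_MAP[key]
    except KeyError:
        # No silent "OTHER" default: unmapped classes are exactly how
        # mismatched taxonomies leak into combined density maps.
        raise UnmappedClassError(key) from None
```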

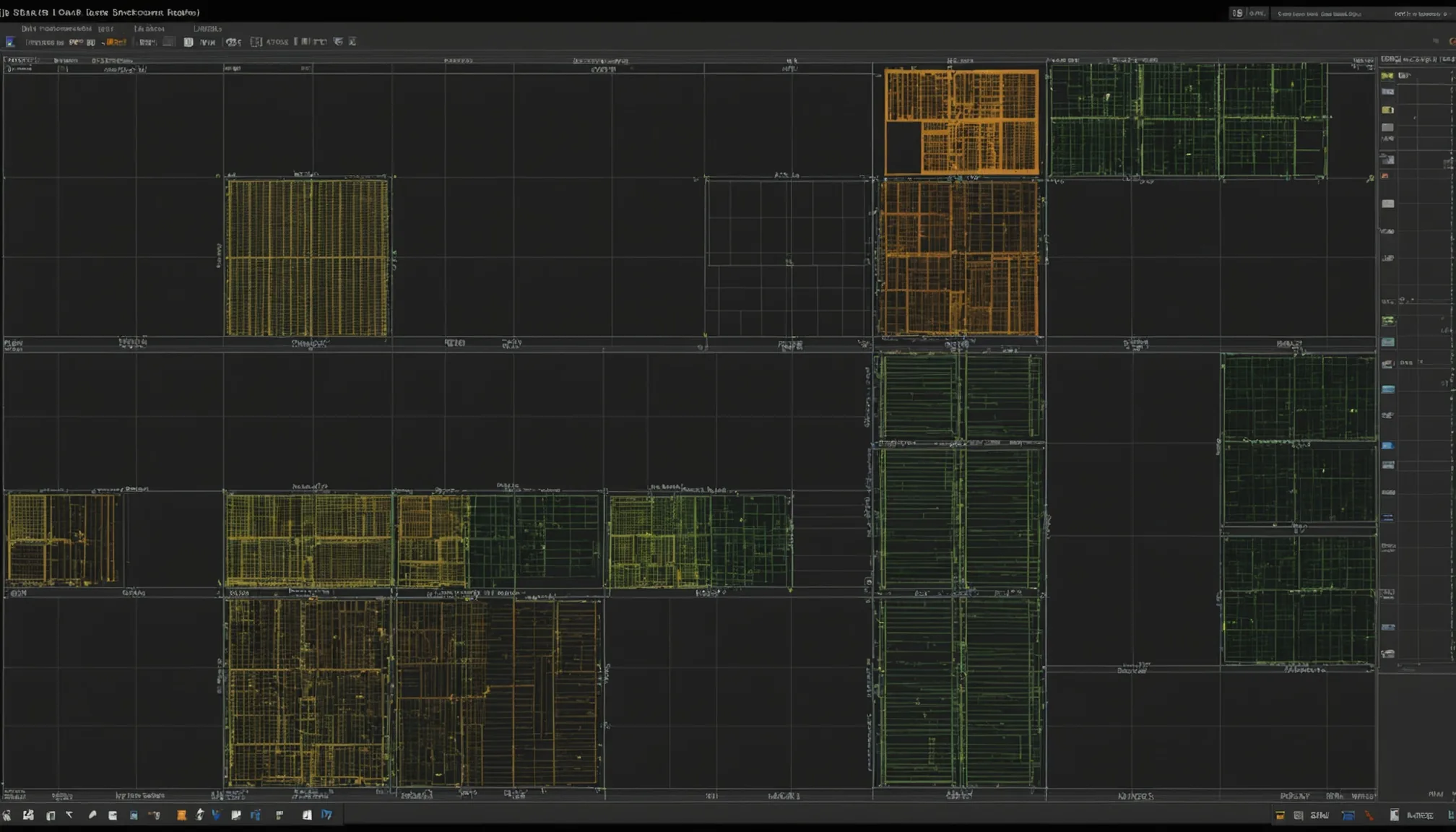

Combined Defect Density Maps

Once records are in a common schema, you can build combined spatial density maps. The most useful representation is a density heatmap that aggregates defect counts from both tools on a shared grid. Bin the wafer surface into cells, typically 2mm x 2mm for a 200mm wafer (or one cell per die), and count defects from both sources per cell.
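A minimal sketch of the gridding step with NumPy, assuming coordinates are in millimetres with the wafer center at (0, 0) after alignment. The function name and the choice to mask off-wafer cells as NaN are assumptions of this example.

```python
import numpy as np


def density_grid(x_mm, y_mm, wafer_diameter_mm=200.0, cell_mm=2.0):
    """Defect counts per cell on a square grid centered on the wafer.

    Coordinates are in mm with the wafer center at (0, 0). Cells whose
    center falls outside the wafer are set to NaN so they don't dilute
    later statistics. Defects outside the grid are ignored.
    """
    half = wafer_diameter_mm / 2.0
    n_cells = int(round(wafer_diameter_mm / cell_mm))
    edges = np.linspace(-half, half, n_cells + 1)
    counts, _, _ = np.histogram2d(x_mm, y_mm, bins=[edges, edges])
    centers = (edges[:-1] + edges[1:]) / 2.0
    # cx[i, j], cy[i, j] are the center coordinates of cell (i, j).
    cx, cy = np.meshgrid(centers, centers, indexing="ij")
    counts[cx**2 + cy**2 > half**2] = np.nan
    return counts
```

Build one grid per tool on the same edges; the combined map is then the element-wise sum, `density_grid(kla_x, kla_y) + density_grid(onto_x, onto_y)`. Keeping the per-tool grids around as well is what makes cross-tool comparisons possible later.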

What this reveals that single-tool maps miss: when the KLA tool catches a ring pattern at 60mm radius and the Onto tool catches a cluster at the center, you're looking at two different process signatures. But when both tools show coincident density peaks at the same spatial location, you've just shortened your excursion hypothesis list dramatically. In our data, coincident multi-tool density peaks have a roughly 3 to 4 times higher rate of tracing back to a single process event (particle contamination, scratch, or film-deposition non-uniformity) compared to single-tool peaks.
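Coincident peaks can be found by thresholding each tool's map separately and intersecting the results. A sketch, assuming grids of equal shape with NaN for off-wafer cells; the mean + k·std peak rule with k = 3 is an illustrative choice, not a rule from the workflow described here, and a robust alternative (median/MAD) may behave better on sparse maps.

```python
import numpy as np


def peak_mask(grid, k=3.0):
    """Cells whose count exceeds mean + k * std over on-wafer (non-NaN) cells."""
    mu = np.nanmean(grid)
    sigma = np.nanstd(grid)
    # NaN cells compare False; silence the comparison warning for them.
    with np.errstate(invalid="ignore"):
        return grid > mu + k * sigma


def coincident_peaks(grid_a, grid_b, k=3.0):
    """(row, col) indices of cells that are peaks in both tools' maps."""
    both = peak_mask(grid_a, k) & peak_mask(grid_b, k)
    return np.argwhere(both)
```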

How Multi-Tool Correlation Narrows the Excursion Source

The diagnostic logic is straightforward once the data is clean. Each tool in your inspection sequence covers a different process window. Say KLA macro inspection runs post-CMP and Onto bright-field runs at a later post-deposition step. If a defect pattern appears in both datasets and the spatial distributions are correlated, the contamination source is upstream of both inspection points. If the pattern appears only in the post-deposition Onto data and not at the earlier KLA step, the event happened between those two inspection points. Simple in principle.
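The two-inspection-point reasoning is small enough to encode directly, which helps keep triage consistent between engineers. A sketch; the function name and return strings are hypothetical, and any case the logic above doesn't cover falls through to "not localized by this rule" rather than guessing.

```python
def localize_excursion(seen_early: bool, seen_late: bool, correlated: bool) -> str:
    """Hypothetical encoding of the two-inspection-point logic.

    seen_early: pattern present at the earlier inspection (post-CMP in the example)
    seen_late:  pattern present at the later inspection (post-deposition)
    correlated: the two spatial distributions are correlated
    """
    if seen_early and seen_late and correlated:
        return "upstream of both inspection points"
    if seen_late and not seen_early:
        return "between the two inspection points"
    # Anything else (e.g. seen by both tools but uncorrelated) is left to an engineer.
    return "not localized by this rule"
```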

In practice, we've seen this logic close excursion root-cause cycles in 6 to 8 hours instead of the 2 to 3 days that single-tool triage takes. The key is having the alignment and normalization infrastructure in place before the excursion hits. An active excursion, with engineering already under pressure, is not the time to build it. Trust us on this.

One structural pattern worth building: a cross-tool correlation score per lot, computed as the Pearson correlation between the two per-die density vectors. A score above 0.7 flags the lot for coordinated review. A score near zero means the defect populations are spatially uncorrelated, which is itself diagnostic information pointing to tool-specific or step-specific sources.
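A sketch of the per-lot score, assuming each tool's density map has already been built on the same grid (cells outside the wafer as NaN). Only cells valid in both maps are compared, and the score is returned as NaN when either map is flat, since Pearson correlation is undefined there. Function names are hypothetical; 0.7 is the threshold from the text.

```python
import numpy as np


def cross_tool_score(grid_a, grid_b):
    """Pearson r between two same-shape density maps, over cells valid in both."""
    if np.shape(grid_a) != np.shape(grid_b):
        raise ValueError("density maps must share the same grid")
    a = np.asarray(grid_a, dtype=float).ravel()
    b = np.asarray(grid_b, dtype=float).ravel()
    valid = ~(np.isnan(a) | np.isnan(b))
    a, b = a[valid], b[valid]
    # Pearson r is undefined if either map is constant over the valid cells.
    if a.size < 2 or a.std() == 0 or b.std() == 0:
        return float("nan")
    return float(np.corrcoef(a, b)[0, 1])


def flag_for_review(score, threshold=0.7):
    """True when the lot should go to coordinated review; NaN never flags."""
    return bool(score > threshold)
```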

Typical Fab Workflow for Dual-Vendor Inspection

Here's how a fab using both KLA and Onto tools can structure the operational workflow:

- Inspection trigger: Both tools log defect files to a shared network path or FDC (fault detection and classification) system on completion of each wafer inspection job.

- Parser ingest: An automated parser (Python, Perl, or whatever your MES shop prefers) reads new files, applies the coordinate transform to the Onto output, normalizes both to the common schema, and inserts records into a defect database.

- Correlation computation: At lot completion, a scheduled job computes the per-die density vectors for each tool, calculates the cross-tool correlation score, and flags outlier lots.

- Engineer review queue: Lots above the correlation threshold, or lots where either tool triggered a bin-count alarm, appear in a daily review queue. The engineer sees both maps side by side and the correlation score.

- Root-cause branching: High correlation → trace to common upstream process step. Low correlation with single-tool alarm → focus on tool-specific source (chuck, optics, load-port particles).
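The queueing and branching rules in the last two steps can be sketched as a single triage function. `LotResult` and `triage` are hypothetical names; the branch for lots where both tools alarm but the score stays low is an assumption added here, since the workflow above doesn't specify it.

```python
from dataclasses import dataclass


@dataclass
class LotResult:
    lot_id: str
    score: float       # cross-tool correlation score for the lot
    kla_alarm: bool    # bin-count alarm on the KLA tool
    onto_alarm: bool   # bin-count alarm on the Onto tool


def triage(lot: LotResult, threshold: float = 0.7) -> tuple[bool, str]:
    """Return (in_review_queue, suggested_branch)."""
    high_corr = lot.score > threshold          # a NaN score compares False
    single_alarm = lot.kla_alarm != lot.onto_alarm
    in_queue = high_corr or lot.kla_alarm or lot.onto_alarm
    if high_corr:
        branch = "trace to common upstream process step"
    elif single_alarm:
        branch = "tool-specific source (chuck, optics, load-port particles)"
    elif in_queue:
        # Assumption: both tools alarmed but maps don't correlate.
        branch = "both tools alarmed without spatial correlation: review maps manually"
    else:
        branch = "no action"
    return in_queue, branch
```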

This workflow doesn't require expensive third-party yield management software. A moderately experienced Python developer and a relational database can implement the core of it in two to three weeks. The value comes from the process discipline, not the tooling cost.

Practical Notes Before You Start

A few things we've learned the hard way:

Calibration drift is faster than you think. Re-validate your coordinate transform at least every 90 days, not just after PM events. Stage bearing wear in high-throughput environments can shift the transform coefficients measurably within a quarter.
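One way to make re-validation quantitative is to compare a freshly fitted transform against the stored one and measure how far they disagree across the wafer. A sketch, assuming both transforms are 2x3 affine matrices in microns; because the difference between two affine maps is itself affine, its largest displacement over the wafer lies on the outer radius, so sampling that circle is enough. The 95mm default radius is illustrative, and the tolerance you compare the result against is yours to set.

```python
import numpy as np


def max_transform_shift_um(A_old, A_new, radius_um=95000.0, n_samples=720):
    """Largest displacement (microns) between two 2x3 affine transforms.

    The difference of two affine maps is affine, so its largest displacement
    over a disk lies on the boundary; sampling the circle at radius_um is
    therefore enough (to within the sampling resolution).
    """
    t = np.linspace(0.0, 2.0 * np.pi, n_samples, endpoint=False)
    pts = np.column_stack([radius_um * np.cos(t), radius_um * np.sin(t)])

    def apply(A):
        A = np.asarray(A, dtype=float)
        return pts @ A[:, :2].T + A[:, 2]

    return float(np.max(np.linalg.norm(apply(A_new) - apply(A_old), axis=1)))
```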

Don't aggregate across recipe changes. If either tool changes inspection wavelength, threshold, or scan mode, your historical density maps are no longer comparable. Version your recipes and break the correlation dataset at recipe change boundaries.
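Breaking the dataset at recipe boundaries amounts to grouping time-ordered inspections by the pair of recipe versions in force. A sketch with hypothetical field names (`time`, `kla_recipe`, `onto_recipe`); note that a change back to an earlier recipe still starts a new segment, which is the conservative choice.

```python
from itertools import groupby


def split_at_recipe_changes(inspections):
    """Split inspection events into segments with a constant recipe pair.

    Each event is a dict with hypothetical keys 'time', 'kla_recipe',
    'onto_recipe'. A new segment starts whenever either tool's recipe
    version changes, including a change back to an older version.
    """
    ordered = sorted(inspections, key=lambda e: e["time"])

    def recipe_pair(event):
        return (event["kla_recipe"], event["onto_recipe"])

    # groupby only merges consecutive events, so every change opens a segment.
    return [list(seg) for _, seg in groupby(ordered, key=recipe_pair)]
```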

Nuisance defect filtering matters more in combined analysis. A nuisance defect rate that's acceptable in single-tool analysis can generate spurious spatial correlations when two noisy datasets get combined. Set your nuisance filter thresholds conservatively before building the combined density maps.
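A sketch of a shared nuisance filter, applied identically to both tools' records before any gridding. The size floor and nuisance class names are placeholders, not recommended values; set them from your own nuisance review.

```python
# Hypothetical canonical nuisance classes; replace with your mapping-table output.
NUISANCE_CLASSES = frozenset({"NUISANCE", "UNCLASSIFIED"})


def drop_nuisance(defects, min_size_um=0.2, nuisance_classes=NUISANCE_CLASSES):
    """Keep defects at or above the size floor and outside the nuisance classes.

    Each defect is a dict with hypothetical keys 'size_um' and 'defect_class'.
    Using one filter for both tools keeps either tool's nuisance floor from
    leaking into the combined maps.
    """
    return [
        d for d in defects
        if d["size_um"] >= min_size_um and d["defect_class"] not in nuisance_classes
    ]
```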

The Payoff

Running cross-tool correlation analysis isn't glamorous infrastructure work. But fabs that have it in place consistently outperform those that don't on time-to-root-cause during excursions. The data is already there on both tools. The work is in making it speak the same language.

We've built tooling specifically for this problem because we kept seeing fabs lose days on excursions that the data, already collected, could have answered in hours. The coordinate alignment step alone, once automated, reduces the manual analysis burden by roughly 60 to 70 percent on a typical multi-tool excursion investigation. That's not a small number when you're running production lots through a constrained specialty node.

Start with the reference wafer calibration. Everything else follows from that.